A potential client sent me an expert guide they wrote after interviewing senior software engineers. They included case studies too, and even a proprietary methodology.

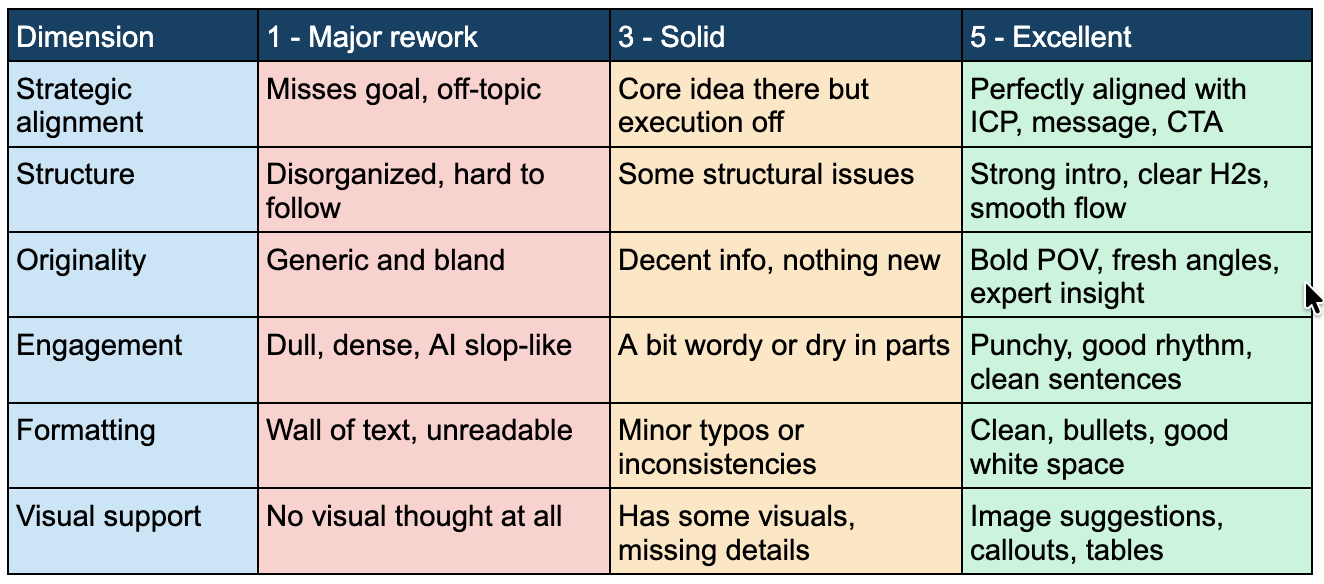

It looked like serious expert content, no doubt. But then I ran it through our Content Quality Score, and it scored 14 out of 30 across the quality dimensions. Originality: 1 out of 5.

When I told the client, they pushed back. They kept saying it was expert and thorough, and that the team had worked hard on it.

But thoroughness and originality are not the same thing. Most content teams treat them as if they are. So they produce long, comprehensive, well-structured pieces that say nothing a reader couldn't generate with an AI tool in two minutes.

This is the third dimension of the Content Quality Score: Originality. Does this piece offer a POV, insight, or angle that only this company could give?

Before AI, a thorough article on a relevant topic had value. All you needed to do was cover the topic reasonably well. That world is gone.

AI tools can cover any topic thoroughly. What they can't do is draw on your company's proprietary data, cite your clients' specific results, reflect knowledge that comes from years of doing the work, or produce a point of view that comes from experience rather than pattern-matching on what's already online.

A piece that scores 1 on Originality is a piece AI could have written this morning without knowing a single thing about your business.

A lot of B2B content is exactly this. And a lot of content managers don't realize it, because the piece is long and the topic is right and the formatting looks professional.

Originality means the piece contains something that couldn't exist without your company's direct experience or perspective.

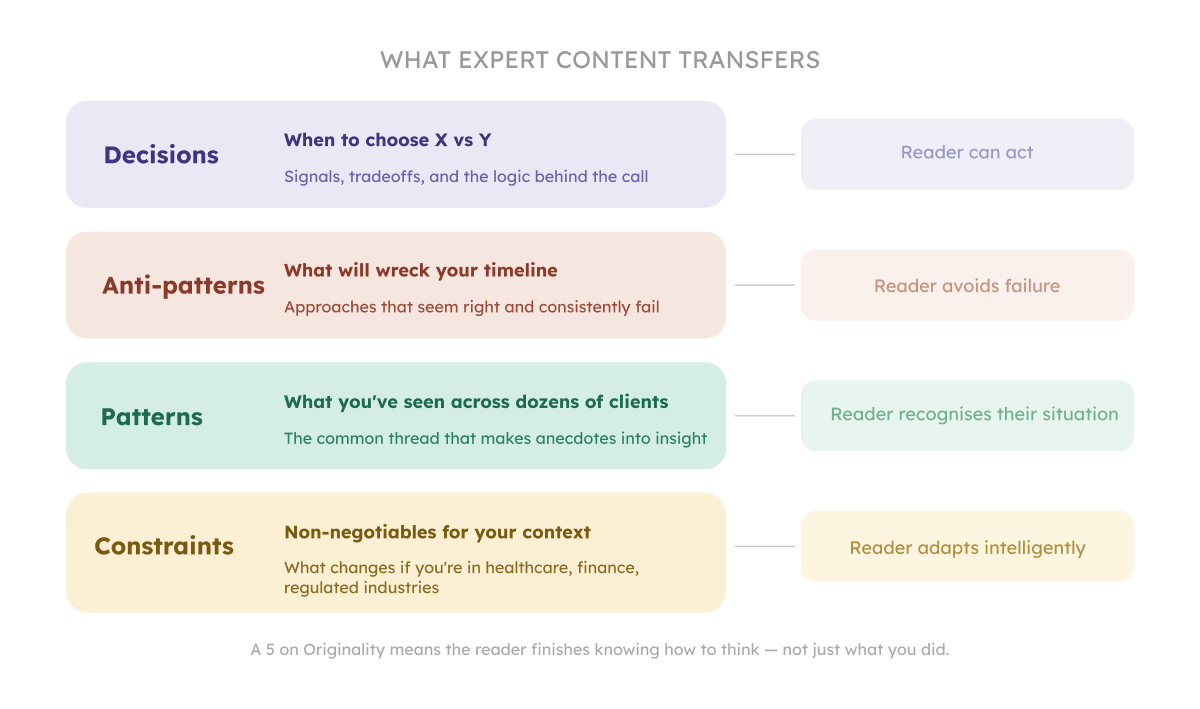

There are four sources: proprietary data, client results with specifics, operational patterns, and hard-won insights.

A piece that scores 5 on Originality has at least one of these, built into the argument.

Originality comes in a few levels.

Light originality: a contrarian angle on a common practice, a distinctive voice, or a first-person account of how you solved a specific problem. These are genuine forms of originality. They are enough to differentiate a piece from AI-generated fluff, and they don't take months to produce. If a piece is built entirely on light originality, with no proprietary data, client specifics, or operational patterns, it can score a 3. The pieces that score 5 go further. They make claims only your track record can support.

Medium originality: an interview with an internal expert or client that produces insights a generalist writer could not have found. Content built from your team's operational reality rather than assembled from secondary sources.

Heavy originality: proprietary research conducted specifically to support a content piece. This can be an analysis of your own client data across 50+ engagements, or a framework that took two years of delivery work to develop. This kind of piece is hard to produce and harder to replicate. It earns the most.

The question to ask before scoring any piece: where does it sit on that spectrum? And does the score reflect that honestly?

Start with the introduction. If it opens with a market size stat, a broad industry observation, or a definition of the category the piece is about, the piece probably scores a 1 on Originality.

A strong introduction signals immediately why this piece exists and what it offers that nothing else does. The writer has something specific to say, so they say it from the first line.

A weak introduction warms up with context. The writer has nothing specific to say, so they buy time with information that sounds relevant.

When I receive a draft and the opening line is "The X market is growing rapidly, with global revenue expected to reach $Y by 2027," I already know what the Originality score will be before I read further.

Back to the guide that scored a 1 for Originality.

They did interview engineers, which should have given them hard-won operational insights, proprietary frameworks, or client results. But the content didn't do what expert content needs to do. Four things were missing:

It described choices instead of explaining how to make them. The guide outlined which approach the company had selected on several projects. It said nothing about when you would choose that approach over an alternative, what signals tell you which path makes sense, or what you're trading off either way. A reader walks away knowing what the company did. Not knowing what they should do.

It documented only successes. Every methodology was presented as effective. There was no "here are three approaches that seem logical and will wreck your timeline." The hard-won knowledge in any experienced engineering team is what they stopped recommending. That's what belongs in expert content.

It avoided patterns. The guide included two client examples and noted that "every project is unique." Which raises an obvious problem: if every project is unique, the guide has nothing generalized to teach. Expertise is what happens when you work with 47 clients and notice the same issue appearing in 34 of them. That pattern is something a reader can use.

It stayed vague about constraints. "We adapted to client requirements" appeared in multiple places. This is one of the least informative sentences in B2B content. The expert version: "If you're in healthcare or finance, here are the three non-negotiables that shape every architectural decision, and here's why teams who ignore them spend six months walking it back."

My guess is that the engineers knew all of this. It just didn't make it into the content.

Score: 1. AI could have written this without knowing anything about your business. The writer didn't mention any proprietary data, client results with specifics, operational patterns, or hard-won insights. They just covered the topic comprehensively.

Score: 3. One original element is present but not fully deployed. This can be a stat cited without analysis, a client mentioned without specifics, or a framework described without the reasoning behind how it was built through delivery. Something differentiates the piece from a generic treatment of the topic, but a reader doesn't walk away with something they could only have gotten from you.

Score: 5. The piece makes a claim that only your company's track record can support, and it proves it. A reader could not find this reasoning, this data, or this pattern anywhere else. The originality is structural to the argument.

The Originality dimension shows you the problem. But you still need editorial judgment to know what a 5 looks like.

A content manager who hasn't been trained on what expert content needs to do will read the same guide I scored at 14 and see something credible, thorough, and professionally produced. The score is only as useful as the person using it.

This is the same problem I wrote about a few editions back. Some people genuinely can't tell good writing from bad. With Originality specifically, some content managers can't tell expert content from content that merely looks expert. Developing that judgment takes time and exposure to the real thing.

So the score isn't going to help if nobody on your team has taste.

We have 3 more Content Quality dimensions to score. Don't miss the next issue.

Kateryna

P.S. If we aren't connected already, follow me on LinkedIn and Instagram. If you like this newsletter, please refer your friends.

P.P.S. Need help with quality content? Zmistify your content with Zmist & Copy.

Subscribe to From Reads to Leads for real-life stories, marketing wisdom, and career advice delivered to your inbox every Friday.